VMware Cloud Foundation (VCF) 9.1 is here — and it’s one of the most feature‑packed releases in years. This update isn’t just incremental; it’s a strategic modernization of compute, storage, networking, security, and operations across the entire private cloud stack.

Let’s break down the biggest enhancements and why I think they matter.

Modernizing Infrastructure Economics with vSphere Foundation 9.1

VCF 9.1 brings several powerful updates to the vSphere layer, aimed at improving performance efficiency and reducing operational overhead.

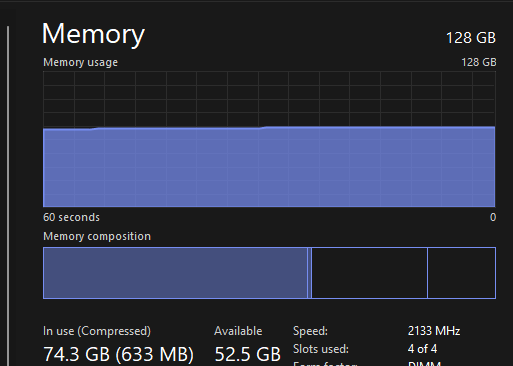

Enhanced NVMe Memory Tiering

Workloads that demand high throughput and low latency benefit from smarter memory tiering. NVMe-based memory tiers now deliver improved performance and flexibility. (And yes — many are hoping Secure Boot support lands here as well.)

Parallel Processing of DRS vMotion

DRS can now process multiple vMotions in parallel, dramatically reducing cluster balancing times. This is especially impactful in large-scale environments with frequent workload mobility.
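To get a feel for why parallelism shortens balancing, here is a rough back-of-the-envelope sketch. It is not how DRS schedules migrations internally; the `balancing_time` function, the durations, and the concurrency limit of 3 are all hypothetical values chosen for illustration.

```python
import heapq

def balancing_time(durations, concurrency):
    """Wall-clock time to finish all migrations when at most
    `concurrency` run at once (greedy, taken in list order)."""
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    # Each heap entry is the time at which a migration slot becomes free.
    slots = [0.0] * min(concurrency, max(len(durations), 1))
    heapq.heapify(slots)
    finish = 0.0
    for duration in durations:
        start = heapq.heappop(slots)      # earliest free slot
        end = start + duration
        finish = max(finish, end)
        heapq.heappush(slots, end)
    return finish

# Hypothetical vMotion durations in seconds (not measured values).
durations = [60, 45, 30, 90, 60, 45]
sequential = balancing_time(durations, 1)   # one vMotion at a time
parallel = balancing_time(durations, 3)     # assumed limit of 3 at once
print(f"sequential: {sequential:.0f}s, parallel: {parallel:.0f}s")
```

With these made-up numbers the same six migrations finish in 120 seconds instead of 330. Real gains will depend on network bandwidth, VM memory size, and whatever limits DRS applies.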

Live Patching for TPM-Enabled Hosts

Live patching now works even on hosts with TPM enabled — a huge win for security-conscious organizations that previously had to choose between uptime and compliance.

Networking Updates: Scale, Simplicity, and Smarter Automation

VCF 9.1 introduces major networking enhancements that streamline operations and expand connectivity options.

Enhanced Day-2 VM Lifecycle Management

Networking changes for VMs — including NIC updates, IP changes, and security policies — are now easier and more automated.

Existing VLAN Connectivity via Distributed Transit Gateways

You can now bridge existing VLAN-based networks into VCF environments more seamlessly, reducing migration friction and simplifying hybrid designs.

Streamlined Firewalls & Automated Inter-VPC Security

Security policies between VPCs are now automated, reducing manual rule creation and improving consistency across tenants.

Terraform Provider Enhancements

Better support for tenant-level policy and content management means more automation and cleaner IaC workflows.

Simplified Workload Connectivity & Enhanced Network Scale

EVPN-VXLAN Interoperability

VCF 9.1 now supports EVPN-VXLAN interoperability with the physical network fabric. This is a major step toward fully integrated, fabric-aware cloud networking.

Network Assessment & VPC Planning

New tools and workflows help architects plan VPC layouts, assess network readiness, and avoid misconfigurations before deployment.
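One classic misconfiguration worth catching before deployment is overlapping address space between VPCs. The snippet below is a minimal sketch of that kind of pre-check using Python's standard `ipaddress` module. It is not the VCF assessment tool, and the VPC names and CIDR blocks are made up for illustration.

```python
import ipaddress
from itertools import combinations

def find_overlaps(planned):
    """Return pairs of VPC names whose CIDR blocks overlap."""
    networks = {name: ipaddress.ip_network(cidr) for name, cidr in planned.items()}
    overlaps = []
    for (name_a, net_a), (name_b, net_b) in combinations(networks.items(), 2):
        if net_a.overlaps(net_b):
            overlaps.append((name_a, name_b))
    return overlaps

# Hypothetical VPC plan (names and ranges are illustrative only).
planned_vpcs = {
    "vpc-prod": "10.10.0.0/16",
    "vpc-dev": "10.20.0.0/16",
    "vpc-test": "10.10.128.0/20",   # sits inside vpc-prod's range
}
conflicts = find_overlaps(planned_vpcs)
print(conflicts)
```

Here `vpc-test` falls inside the `vpc-prod` range, so that pair is reported as a conflict to resolve before anything is deployed.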

Optimize, Modernize & Protect Storage with vSAN in VCF 9.1

Storage gets a significant upgrade in this release, especially for environments focused on efficiency and resilience.

Encryption for vSAN Global Deduplication

Global dedupe is now compatible with data-at-rest encryption — a long-awaited capability for secure, space-efficient storage.

Enhanced Stretched Cluster Capabilities

Improved resilience and smarter failure handling strengthen business continuity for mission-critical workloads.

Automated Storage Policy Management

Policies now adjust automatically based on cluster configuration changes, reducing manual tuning and risk of misalignment.

Strengthening Zero Trust Security & Platform Resilience

Security is a major theme in VCF 9.1, with improvements across the stack.

Data-at-Rest Encryption for Global Dedupe

This ensures encrypted storage without sacrificing dedupe efficiency — a rare combination in enterprise storage.

Quick Patching for vCenter

Faster patch cycles reduce exposure windows and simplify maintenance.

Live Patching for TPM-Enabled Hosts

As mentioned earlier, this is a major operational win for secure environments.

Continuous Compliance & Integrated Cyber Recovery

VCF 9.1 pushes deeper into automated compliance and recovery workflows.

Compliance Monitoring & Desired State Remediation

The platform now continuously checks VCF components against desired state and can automatically remediate drift.
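The general idea behind desired state remediation can be sketched in a few lines: compare what you want with what you have, then reset anything that differs. This is only a conceptual illustration, not the VCF implementation or its API. The setting names (`ntp_server`, `ssh_enabled`, `lockdown_mode`) and both helper functions are hypothetical.

```python
def detect_drift(desired, actual):
    """Return {setting: (desired_value, actual_value)} for every mismatch."""
    return {
        key: (value, actual.get(key))
        for key, value in desired.items()
        if actual.get(key) != value
    }

def remediate(desired, actual):
    """Return a copy of `actual` with drifted settings reset to desired values."""
    fixed = dict(actual)
    for key in detect_drift(desired, actual):
        fixed[key] = desired[key]
    return fixed

# Hypothetical host settings, for illustration only.
desired = {"ntp_server": "ntp.example.com", "ssh_enabled": False, "lockdown_mode": "normal"}
actual = {"ntp_server": "ntp.example.com", "ssh_enabled": True, "lockdown_mode": "normal"}

drift = detect_drift(desired, actual)
fixed = remediate(desired, actual)
print(drift)
```

In this example only `ssh_enabled` has drifted, and after remediation a second check reports no drift. A continuous compliance loop would simply repeat that check on a schedule.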

VPC Policy-Based Connectivity

Security and connectivity policies can now be applied consistently across VPCs, improving governance and reducing misconfigurations.

VMware Data Services Manager 9.1: Modern Databases for AI & Cloud

Microsoft SQL Server 2022 Now GA

SQL Server 2022 is now fully supported and generally available through DSM 9.1, enabling automated lifecycle management for modern database workloads — including those powering AI and analytics.

Want to See It in Action?

VMware has published a full VCF 9.1 video podcast series that dives deeper into the new capabilities:

That leaves plenty to do in my homelab, starting with the upgrade and then testing the new features!

Figure 1: Holodeck Nested Diagram

